If 2025 felt busy but somehow unproductive, you’re not alone.

Across Europe, many radio organisations spent the past year discussing digital transformation in great detail. There were steering committees, strategy decks, and carefully articulated roadmaps about podcasts, platforms, and audience-first thinking. The intent was genuine, the energy real, and the people involved often highly competent.

And yet, as 2026 begins, the uncomfortable reality is that very little has materially changed.

The same shows dominate the schedule. Digital products remain marginal. On-demand listening is still treated as an extension of the linear world rather than a different logic altogether. Not because people don’t care, but because most organisations got stuck in planning mode.

That is why 2026 risks looking a lot like 2025, unless one thing changes.

Not the strategy itself. Not the organisational chart. But the question you ask.

The question that kills momentum

At the start of the year, many leadership teams instinctively ask: “What should our strategy for digital be in 2026?” It sounds reasonable, even necessary. But in practice, it almost always leads to the same outcome: months of alignment work, incremental refinements of existing plans, and very little that actually reaches an audience.

The problem isn’t strategy per se. The problem is that this question pushes organisations into abstraction. It rewards coherence on paper rather than learning in the real world.

A far more productive question is simpler, and slightly uncomfortable: “What is the first thing we are going to test in Q1?”

That question forces specificity. You can’t hide behind ambition, intent, or budgets. You have to decide what will be built, for whom, and what you expect to learn. It shifts the conversation from vision to execution, from consensus to evidence.

Why planning feels reassuring, and why that’s dangerous

Planning feels safe because it looks controlled. It produces documents, milestones, and a sense of professionalism that organisations are deeply comfortable with.

I saw this clearly with a large regional radio group last year. Over several months, they produced a thoughtful digital audio platform strategy, complete with phased delivery, governance models across stations, and a multi-year horizon. The work was solid. Everyone involved acted in good faith.

But by early autumn, nothing had launched.

Each delay had a rational explanation: infrastructure first, then team alignment, then budgets, then board approval. None of these steps was wrong in isolation. Together, they created paralysis.

At the same time, one of their presenters began posting short clips on TikTok, without a roadmap or formal approval. Six months later, the account had tens of thousands of followers, and the presenter had a far better understanding of what resonated digitally than the organisation had gained from months of planning.

This contrast is uncomfortable, but instructive. Planning signals seriousness. Testing signals risk. Yet in a digital environment, avoiding small risks almost guarantees a larger one later.

What testing actually looks like in practice

Testing is often misunderstood, especially in organisations shaped by decades of broadcast logic. It does not mean acting randomly, nor does it mean abandoning standards.

It means narrowing the scope of ambition so that something can be shipped quickly and evaluated honestly.

In many organisations, months are spent debating what should work digitally. These discussions are usually driven by experience, intuition, and well-intentioned beliefs about the audience. The problem is not that this intuition is wrong, but that it remains untested.

A more productive alternative is to take a single assumption – for example, that listeners want longer, more in-depth versions of interviews – and confront it with reality. Release a small number of episodes in a clearly defined format, then observe what happens: do people start listening, do they finish, do they come back?

One approach stretches learning over months of discussion. The other compresses it into a few weeks of real-world evidence. And in a digital environment where habits evolve quickly, progress comes from fast, validated learning rather than confidence in what should work.
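The three questions above (do people start listening, do they finish, do they come back) can be turned into numbers with very little tooling. The sketch below is illustrative only: the `Listen` record, the `summarise_test` function, and the 90% "finished" threshold are assumptions made for this example, not a standard or any platform's export format.

```python
from dataclasses import dataclass


@dataclass
class Listen:
    """One play of one episode by one listener (hypothetical record shape)."""
    listener_id: str
    episode_id: str
    seconds_played: float
    episode_length: float


def summarise_test(listens, people_reached, finish_threshold=0.9):
    """Answer: do people start, do they finish, do they come back?

    people_reached is the number of people who were offered the episodes;
    it is assumed to come from whatever platform distributed them.
    """
    starters = {l.listener_id for l in listens}
    # A listener "finished" if they played at least the threshold share
    # of any single episode.
    finishers = {
        l.listener_id
        for l in listens
        if l.seconds_played >= finish_threshold * l.episode_length
    }
    # A listener "came back" if they played two or more distinct episodes.
    episodes_by_listener = {}
    for l in listens:
        episodes_by_listener.setdefault(l.listener_id, set()).add(l.episode_id)
    returners = {lid for lid, eps in episodes_by_listener.items() if len(eps) >= 2}

    n = len(starters)
    return {
        "start_rate": n / people_reached if people_reached else 0.0,
        "completion_rate": len(finishers) / n if n else 0.0,
        "return_rate": len(returners) / n if n else 0.0,
    }
```

Even a rough summary like this, run after two or three episodes, gives a team something to discuss other than opinions about what should work.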

What could realistically be shipped before the end of Q1

Most organisations underestimate how much they can learn in a short period if they focus.

Within a single quarter, teams can move well beyond isolated experiments and generate real evidence about what works digitally. Not theoretical insights, but validated learning: signals about audience behaviour, formats, distribution, and value creation that can actually inform decisions.

In practice, this might mean testing whether a flagship show genuinely works as a podcast, exploring a digital-only format before exposing it on air, or distributing one programme aggressively across platforms to understand where discovery really happens. Others might validate content or monetisation ideas through targeted audience tests rather than internal debate.

The key difference is not what is tested, but how fast learning happens.

When experimentation is structured – with clear hypotheses, tight feedback loops, and explicit decision points – meaningful insights can be produced in a matter of weeks. This is precisely why we run Innovation Sprints with our clients: in twelve weeks, teams generate ideas and move from assumptions to evidence, with enough proof to decide whether to scale, pivot, or stop.
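One way to make "clear hypotheses" and "explicit decision points" tangible is to write the decision rule down before the test starts. The sketch below is a minimal illustration, not a description of how any particular sprint is run: the `Hypothesis` fields, the `decide` function, and the example thresholds are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    """A testable assumption with thresholds agreed before launch."""
    statement: str
    metric: str
    scale_at: float    # observed value at or above this: scale
    stop_below: float  # observed value below this: stop


def decide(hypothesis, observed):
    """Map an observed metric to one of: scale, pivot, stop."""
    if observed >= hypothesis.scale_at:
        return "scale"
    if observed < hypothesis.stop_below:
        return "stop"
    # Between the two thresholds the signal is mixed: adjust and retest.
    return "pivot"


# Example thresholds are invented for illustration only.
long_interviews = Hypothesis(
    statement="Listeners want longer, more in-depth versions of interviews",
    metric="completion_rate",
    scale_at=0.6,
    stop_below=0.3,
)
```

Calling `decide(long_interviews, observed)` with the measured completion rate then returns the agreed outcome. Fixing the thresholds in advance matters more than their exact values, because it stops the team from reinterpreting a weak result after the fact.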

Not every test succeeds. Some ideas are discarded quickly. That is the point. What matters is that by the end of Q1, the organisation knows far more about its audience and its digital potential than it did at the start of the year, and has something concrete to show for it.

Crucially, initiatives like these cost less (financially and politically) than another year spent refining strategy documents, and they move learning forward at a completely different speed.

The compounding advantage of learning early

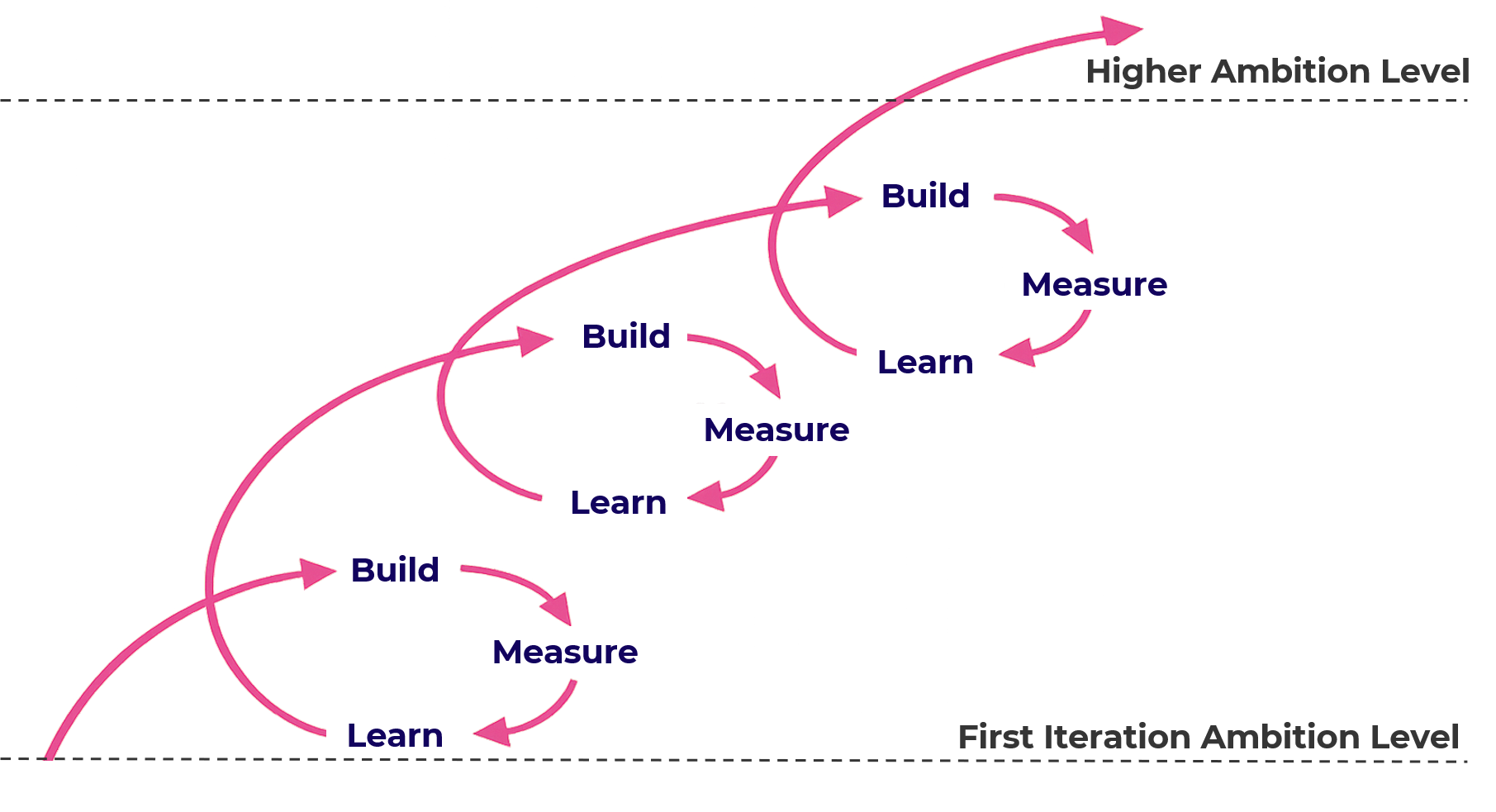

The real advantage of early testing is not that the first experiment succeeds. It is that each test earns the right to aim slightly higher next.

An organisation that runs even a few meaningful tests in Q1 enters Q2 with something rare: real data about audience behaviour. That data informs the next iteration, which is often a little more ambitious than the first. By mid-year, several assumptions have been validated or discarded. By the end of the year, a handful of initiatives are no longer theoretical; they are proven, refined through successive tests, and ready to be integrated.

What matters is not that individual tests are spectacular. Most aren’t. Some fail outright. That is normal. What matters is that learning compounds. Each cycle reduces uncertainty, sharpens focus, and increases confidence in what can reasonably be attempted next.

The paradox is that organisations that start with deliberately modest tests often reach higher ambitions faster than those that try to start big. By contrast, teams that delay experimentation tend to arrive at similar conclusions much later, and at significantly higher cost.

Where the real risk actually lies

A failed three-week test rarely has consequences beyond mild disappointment. Audiences barely notice. Brands remain intact. Teams move on.

The greater risk is far quieter: another year spent discussing and aligning internally while audience behaviour continues to evolve elsewhere. That gap is difficult to close once it becomes visible in the numbers.

Most legacy media organisations did not decline because they made bold but unsuccessful bets. They declined because they waited too long to make any meaningful ones.

Strategy documents do not prevent that outcome. Learning does.

A simple choice for 2026

As the year begins, radio leaders face a choice that is less strategic than it appears.

One path involves refining last year’s plans, securing consensus, and delaying execution until conditions feel ideal. The other involves committing to a small number of concrete tests, accepting uncertainty, and learning quickly.

Both paths can be justified. Only one produces momentum.

By now, most of the industry understands the imperative of digital transformation. The data is well known. The direction of travel is no longer in doubt.

What remains open is whether 2026 will be spent planning or testing.